Contours at EwE

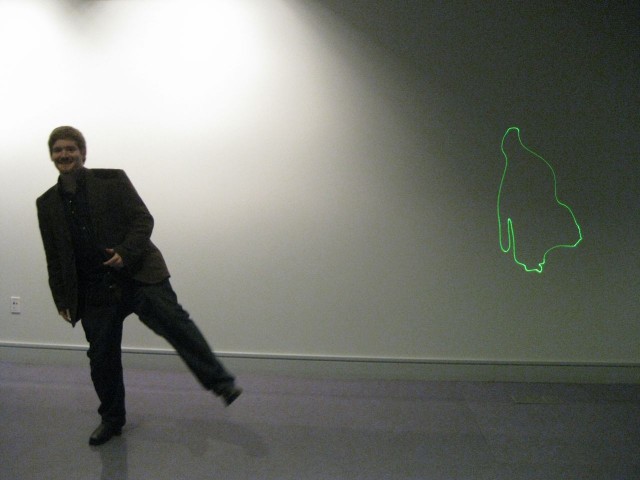

My interactive laser project, Contours, was featured as an installation outside the concert hall at Everybody Wants Everything, the 2009 MAT end-of-year show. The installation consisted of both laser and traditional video projection driven by a webcam. In addition to the live laser outline, the system periodically sampled and accumulated outlines to display history at three levels of time granularity.

See below for a more detailed look at how the project was created.

Contours consists of two custom applications utilizing a variety of platforms and modes of digital transport.

System Input

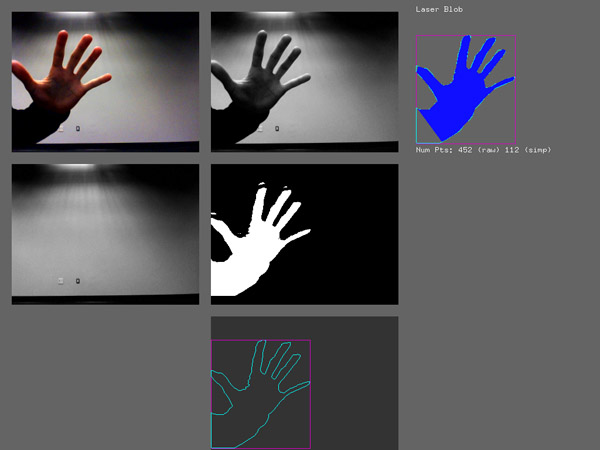

A FireWire camera is connected to a computer vision application written in C++ using openFrameworks, a framework for multimedia applications similar to Processing. The OpenCV computer vision library performs blob detection, which produces an array of XY points corresponding to the outline of the figure in front of the camera.

Detection works better with the camera installed opposite a plain white wall. The wall is also brightly and evenly lit so that subjects appear to the camera as silhouettes, which further improves the quality of the resulting blob. If multiple blobs are found, the largest one by area is selected, since it is most likely to be a person or object of interest.
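As a rough illustration of the largest-blob rule, here is a minimal Python sketch (the installation itself used C++ with openFrameworks and OpenCV, so this is not the project's code). It assumes each blob is a list of `(x, y)` outline points and computes area with the shoelace formula; the names `polygon_area` and `largest_blob` are made up for this example.

```python
def polygon_area(points):
    """Absolute area of a closed polygon, via the shoelace formula."""
    total = 0.0
    n = len(points)
    for i in range(n):
        x1, y1 = points[i]
        x2, y2 = points[(i + 1) % n]  # wrap around to close the outline
        total += x1 * y2 - x2 * y1
    return abs(total) / 2.0


def largest_blob(blobs):
    """Return the outline with the greatest area, or None if there are no blobs."""
    if not blobs:
        return None
    return max(blobs, key=polygon_area)
```

Given a small triangle and a larger square, `largest_blob` returns the square.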

The blob is simplified using the Ramer-Douglas-Peucker algorithm, which reduces the number of points without noticeably changing the overall appearance. Fewer points means less data to transmit, which improves system performance. In the example below (from early development), the original blob (cyan) had 452 points, while the simplified one (solid blue) needed only 112 points and looks nearly identical.
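For readers unfamiliar with Ramer-Douglas-Peucker, the idea can be sketched in a few lines of Python. This is a textbook recursive version, not the code from the installation. The tolerance `epsilon` is an assumed parameter (the value used in the project is not recorded here), and the sketch treats the outline as an open polyline.

```python
import math


def perpendicular_distance(p, a, b):
    """Distance from p to the line through a and b (distance to a if a == b)."""
    (px, py), (ax, ay), (bx, by) = p, a, b
    dx, dy = bx - ax, by - ay
    length = math.hypot(dx, dy)
    if length == 0.0:
        return math.hypot(px - ax, py - ay)
    return abs(dx * (ay - py) - dy * (ax - px)) / length


def rdp(points, epsilon):
    """Simplify a polyline, dropping points closer than epsilon to the chord."""
    if len(points) < 3:
        return list(points)
    first, last = points[0], points[-1]
    max_dist, index = 0.0, 0
    for i in range(1, len(points) - 1):
        d = perpendicular_distance(points[i], first, last)
        if d > max_dist:
            max_dist, index = d, i
    if max_dist > epsilon:
        # Keep the farthest point and simplify each half separately.
        left = rdp(points[:index + 1], epsilon)
        right = rdp(points[index:], epsilon)
        return left[:-1] + right  # avoid duplicating the shared point
    return [first, last]
```

A larger `epsilon` removes more points at the cost of a coarser outline, so the value would need tuning against how the laser output looks.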

The blob is transmitted to the Laser program via Open Sound Control as a stream of XY points.
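To make the transport step concrete, here is a hedged Python sketch of how a contour could be packed into a single OSC 1.0 message by hand. The address `/contour` and the flat `x, y, x, y, ...` float32 layout are assumptions for illustration; the actual message format used between the two programs is not documented in this post.

```python
import struct


def osc_string(text):
    """Encode an OSC string: ASCII, null-terminated, padded to a multiple of 4 bytes."""
    data = text.encode("ascii") + b"\x00"
    padding = (4 - len(data) % 4) % 4
    return data + b"\x00" * padding


def encode_contour(points, address="/contour"):
    """Pack (x, y) points into one OSC message of big-endian float32 arguments."""
    flat = [coord for point in points for coord in point]
    type_tags = "," + "f" * len(flat)  # one 'f' tag per float argument
    payload = struct.pack(">%df" % len(flat), *flat)
    return osc_string(address) + osc_string(type_tags) + payload
```

Under this assumed layout, a 452-point contour encodes to 4,536 bytes and a 112-point contour to 1,136 bytes, which shows why simplifying the blob before sending is worthwhile.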

System Output

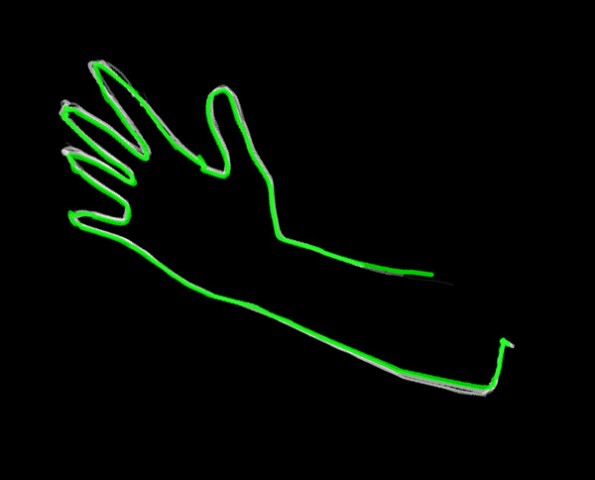

The Laser program is written in Java utilizing the Processing framework for visual feedback. This program receives laser coordinate and color data via Open Sound Control and sends it to the Laser via USB.

The Laser is a ProLaser FX Proshow II previously purchased by Professor George Legrady for a different project. It has red and green lasers, which can combine to create yellow. For this project I used only the green beam, as it was very bright. The Laser is rated at 5 mW, which is a little more powerful than a typical laser pointer but not significantly more dangerous.

The Laser program also manages the historical visual feedback, using a standard VGA Projector.

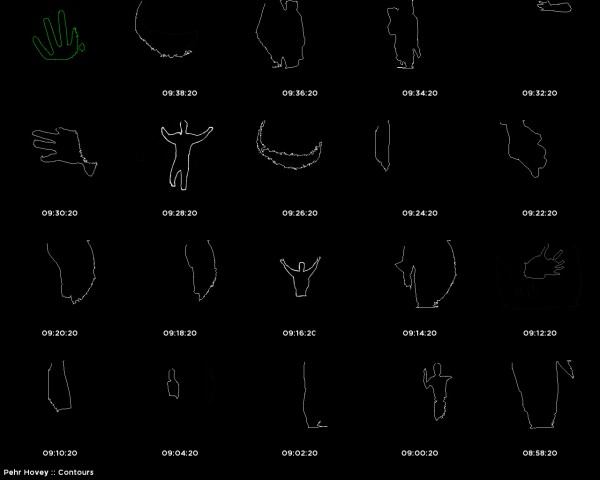

Contour history is displayed in three modes corresponding to different timescales: Immediate, Timeline (short-term), and Grid (long-term). The current blob is displayed in green to match the laser, while historical blobs are all in gray. The program automatically switches between the three display modes to keep the display lively, especially when no participants are nearby and there is no new camera input.

The Immediate display shows the five most recent contours in full scale. Depending on how fast the subject moves you can see trails as the shape changes.

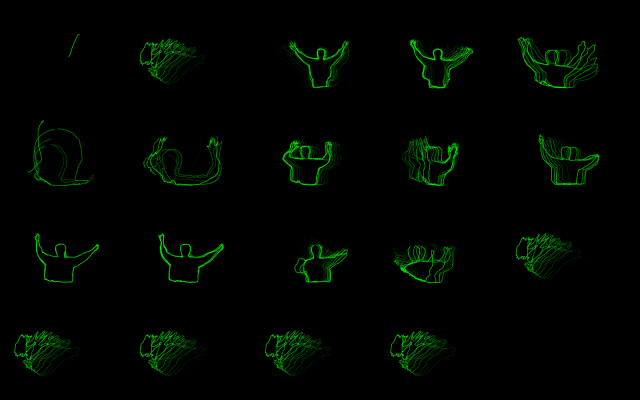

The Timeline shows the current laser blob arranged on a horizontal line along with past contours sampled every thirty seconds. Because the timescale is short, these contours tend to all correspond to the same person.

The Grid shows the live contours along with contours sampled every two minutes. A filled grid shows about half an hour of contour data with each set of contours typically corresponding to a different person.

Each contour sampling is a sequence of several contours that play back as a mini animation to depict both the shape of the contour and the change over time. Each grouping represents a few seconds of contours.
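The sampling scheme described above can be sketched as a small bounded history. This is an illustrative Python version, not the Processing code from the installation. The class name `HistorySampler`, the clip length, and the capacity of 15 (derived from roughly half an hour at one sample every two minutes) are assumptions.

```python
from collections import deque


class HistorySampler:
    """Records a short clip of contours at a fixed interval, keeping a bounded history."""

    def __init__(self, interval_s, capacity, clip_length):
        self.interval_s = interval_s
        self.clip_length = clip_length
        self.clips = deque(maxlen=capacity)  # oldest clips drop off automatically
        self._current = None                 # clip being recorded, if any
        self._next_sample_at = 0.0

    def update(self, now_s, contour):
        """Feed one live contour along with its timestamp in seconds."""
        if self._current is None and now_s >= self._next_sample_at:
            self._current = []
            self._next_sample_at = now_s + self.interval_s
        if self._current is not None:
            self._current.append(contour)
            if len(self._current) == self.clip_length:
                self.clips.append(self._current)
                self._current = None
```

With these assumptions, the Grid would be something like `HistorySampler(interval_s=120, capacity=15, clip_length=...)` and the Timeline the same class with `interval_s=30`; each stored clip can then be looped as a mini animation.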

As different people interact with the system in different ways the grid fills out with a variety of movement and forms.

Contours was developed for MAT 594CP: Open Studio in Optical-Computational Processes, supervised by Professor George Legrady and Andres Burbano. The course webpage has more information on this and other people's projects.